Priors For Cognitive Enhancement

Adderall, ADHD, And Focus: Much Much More Than You Wanted To Know From Whom You Didn't Want To Know It From

A Declaration of Bias

I was diagnosed with ADHD as a teenager. I was prescribed Ritalin but rarely took it. I found it aversive. I also found it dubious that I amongst my peers would be the one to get diagnosed. By my estimate they were largely incapable or unwilling to read books, an activity I often found pleasant. And the books I read, such as One Flew Over the Cuckoo’s Nest, gave me an insider’s view of a leviathan funneling creative types into a neoliberal machine. Dissidence was illness, medicated was synonymous with submission; my Brave New World would have to wait.

And it waited until a couple years ago when I read Why We Sleep and wondered if drinking less caffeine might be good for me. The same week my much more successful friend extolled the benefits of Adderall. The synchronicity of the moment drove me to action. I poured my coffee down the drain, drove to my primary care physician, and emerged a new man.

I was riding high. Adderall was less aversive than Ritalin. In the first few days of use I experienced a mild euphoria and a vastly increased enthusiasm for small talk. Fortunately, such side effects were short lived, and soon I settled into a vague uneasiness that I could forge into the superpower marketed as focus. Life was good.

Until I moved and switched healthcare providers. My new provider was vertically integrated and required me to see an in-house psychiatrist. After a 20-minute appointment I was offered a Continuous Performance Task, a test designed to be so boring as to thwart anyone with bad executive functioning. Much to my surprise (and, honestly, dismay) I passed. My doctor concluded I was “perfectly average” and not a candidate for treatment. Was my ADHD cured? Did I ever truly have it? My wife graciously offered to provide a written letter to my doctor swearing that I am, in fact, generally incompetent. Her generosity notwithstanding, I was in a pickle: having finally embraced society’s medicalization, it was taken from me.

I proposed a metaphor to my doctor.

In which I create a self serving paradigm

A little story:

A young man is on a high school baseball team. He’s strong and athletic, but he’s struggling to stand out from the crowd. His father wears glasses and he questions if his own vision may be imperfect. He raises his concern to his coach, a fastidious man who keeps detailed notes. The coach crunches the numbers: “you’re hitting balls at an average rate. There’s nothing wrong with your vision. Quit worrying and play.”

This is a deceptively attractive heuristic. A perfect hitter needs perfect vision, so a nearly perfect hitter must have at least nearly perfect vision. And, someone who can’t hit the ball at all may very well be blind. But average doesn’t tell you much. I think people tend to see net average and assume subtraits like skill and vision are both average. This is possible. But exceptional skill and poor vision can yield the same average. The young man from our story should get his vision checked.

The metaphor goes deeper. At my last eye appointment my optometrist idly mentioned that my corrected vision is an especially clear 20/15. Visual acuity, I learned, is hard limited by the fovea’s cell density. Here’s an illustration lifted from Wikipedia:

Each of these dots is a photoreceptor cell, and each cell more or less has a fiber wire directly to the brain. What glasses do is correct how the light falls on these cells. For most of us, glasses are functionally perfect. Human vision remains pedestrian because packing more cells into the fovea is still science fiction.

This was an epiphany for me because well over a decade ago my friend and I got into a long argument over whether Tiger Woods getting laser eye surgery to enhance his vision was akin to steroid use and a form of cheating. You can see a contemporaneous argument laid out by Slate.

Try to approach this with an open mind:

“Natural” vision is 20/20. McGwire’s custom-designed lenses improved his vision to 20/10, which means he could see at a distance of 20 feet what a person with normal, healthy vision could see at 10 feet. Think what a difference that makes in hitting a fastball. Imagine how many games those lenses altered.

You could confiscate McGwire’s lenses, but good luck confiscating Woods’ lenses. They’ve been burned into his head. In the late 1990s, both guys wanted stronger muscles and better eyesight. Woods chose weight training and laser surgery on his eyes. McGwire decided eye surgery was too risky and went for andro instead. McGwire ended up with 70 homers and a rebuke from Congress for promoting risky behavior. Woods, who had lost 16 straight tournaments before his surgery, ended up with 20/15 vision and won seven of his next 10 events.

If we’re steelmanning, it’s objectively true that Woods’ eye surgery was expensive, dangerous, and medically unnecessary. And medical resources were spent unnaturally enhancing adequate vision, not helping those with real medical conditions like myopia or hyperopia.

But, am I crazy or are “custom lenses” just contacts? Laser eye surgery also lacks the cyborgian je ne sais quoi it had in the mid 90s. How would the author’s attitude translate if he had been present in Italy in the late 12th century for the emergence of modern eyeglasses? Cue Thus Spoke Zarathustra

and picture an old man in a dingy attic shaping lenses. Imagine the moment he gazes through them and the blurry world snaps into focus. Is this a miracle? Not for our time-traveling critic. In the 12th century there was not yet any hyperopia to be cured [etymology: late 19th century]. So there’s no possible medical usage. Just poor people with real problems and rich aristocrats trying to read past the age that God intended.

Unraveling the metaphor

I hope I’ve convinced you that everyone who plays baseball should get their vision checked. Whether anyone who has ever lost focus should consider Adderall is less certain.

The medicalization of attention mostly falls under the umbrella of ADHD. The disorder has a diagnostic threshold: you either have ADHD or you don’t. This is a classic disease model. For example, medicalization of strep throat can be done with a tiny flow chart: If Strep Throat → yes → take antibiotics. If Strep Throat → no → don’t take antibiotics.

But Strep Throat has a definitive causal factor: streptococcus bacteria. Nothing about ADHD is definitive. There’s no biological marker. No handful of genes. There’s no essential or universal mechanism that can be found via scan, blood, or bile. Its rigorous diagnostic criteria are vague-isms like “lacks ability to complete schoolwork” and “diminished attention span.”

The CPT test I took that started all of this? Worthless for adults.

To get a hold on the disorder we have to seize onto the most tangible aspect of it: it’s an attention problem. The reasonable prior here is that attention is a polygenic trait affected by tens of thousands of genes. Scott from Astral Codex has previously addressed this:

“Ability to concentrate” is a normally distributed trait, like IQ. We draw a line at some point on the far left of the bell curve and tell the people on the far side that they’ve “got” “the disease” of “ADHD”. This isn’t just me saying this. It’s the neurostructural literature, the genetics literature, a bunch of other studies, and the Consensus Conference On ADHD.

That’s a strong statement. If we follow through with his third link we learn:

Advances in statistics better allow us to determine whether a given phenomenon (eg, ADHD) is discontinuous with trait distribution in the general population versus more consistent with the extreme end of a continuum. Evidence of the presence of a taxon, ie, an “entity with real category boundaries that exist independent of social convention or descriptive convenience,” would provide support that a categorical entity exists. Attention deficit hyperactivity disorder does not appear to have a taxon.

My confession here is that my original thesis for this essay was maybe we should treat ADHD as a spectrum. It seems I’m a bit late. But my confusion is not unfounded:

[In the] DSM-5, ADHD retains a categorical structure defined as “inattention and/or hyperactivity-impulsivity” symptom clusters associated with functional impairment.

There’s something strange afoot. Why is ADHD a threshold diagnosis?

Framing the severe end of a continuum as a categorical phenomenon might have some heuristic value and, it has been argued, aligns better with a human bias toward categorical thinking.

It is true that humans are deeply accepting of artificial taxonomies. The age gradient between 16 and 20 years old is smooth, but a single arbitrary moment differentiates between adult and child. The precision of this schism isn’t a function of natural evidence, but rather cultural convenience. A similar categorical imperative may exist for ADHD:

Unfortunately, movement toward a continuum approach might be impeded by administrative forces demanding categorical application. Our medical billing system, for example, requires the application of categorical diagnoses within the provision of assessment and treatment. Also problematic is the requirement for physician- or psychologist-based categorical diagnoses within some school districts in Canada to facilitate children getting access to certain school accommodations or support services. This approach in schools seems even more contrived given that the school population contains children at every gradation along various continuums.

There’s a lot to unravel here, but I will jump straight to the heart of the matter. Threshold categorization has little societal loss when there is threshold treatment. To a philosopher, there’s technically some small gradient of bacteria that is a grey zone between has strep throat and is perfectly healthy. To a doctor, there are technically edge cases where the cost-benefit analysis for medication by antibiotics is about 50/50. No one cares. The clause has strep throat is synonymous with takes antibiotics because if the doctor decides some edge case is sufficient for antibiotics she says ‘you have strep throat.’ Otherwise she says ‘you don’t have strep throat, but if it develops we’ll prescribe antibiotics.’ The uncertainties mirror each other.

This may not be the case with ADHD. My psychiatrist explicitly told me I don’t have ADHD but, to my endless surprise, agreed Adderall was providing me a net benefit. Her points of contention were that my stimulant use was unmedical and unfair. A second psychiatrist within my healthcare system agreed. According to them, there is an entire category of people who don’t have ADHD but do benefit from medication. Are they right?

Priors for stimulant efficacy

An essay in my drafts folder is titled Everything Is An Inverted U. I can’t link it because it’s not published because writing is hard. But I will try to summarize it for you in the meantime:

(Almost) every good thing in biology is only good in moderation. Take oxygen. It’s famously good. Thus more oxygen should be better. But too much oxygen is hyperoxia and that’s ultimately fatal.

It’s probably okay to assume this is a gradient effect. If we graph that gradient we get an Inverted U:

The Inverted U. Suspiciously similar to a normal curve

This is not a rare phenomenon. It’s everywhere. After oxygen, water is the most generically good thing I can think of. It’s almost always culturally laudable to convince kids, old people, or mothers of three to drink more water. But Drinking Water = Good is not an axiom. Past the Goldilocks point water has a deleterious effect: convince our mothers of three to drink enough of it and they’ll begin to desalinate; push them too far and they’ll die. [sad link]

There are exceptions to the inverted U for narrow effects. Opioids dull pain. And more opioids dull more pain. But for any given amount of pain a person is in, their ideal opioid consumption still has a Goldilocks point. This is true even ignoring side effects/addiction/morality because pain isn’t bad. People with Congenital Insensitivity to Pain and Anhidrosis often don’t live past the age of 25. That’s right: dead again.

The pattern here is that narrowly defined traits like pain, or axonal firing, or maybe focus can be maximized. But complex traits, the things we actually want, maximally adaptive pain signaling, maximally functional thinking, and maybe ability to concentrate are all systems that obey an Inverted U pattern. It’s a remarkably robust biological law.

Here is a graph from Hebb (1955) proposing an inverted U for arousal.

This is a foundational graph for the concept of the inverted U. It’s coincidentally on topic for the larger thesis.

More Stimulants → More Arousal is a good heuristic. The theory here is that high levels of arousal are inherently the mental state known as anxiety. This is intended as a feature. Note the location of the peak of the curve is a function of the goal: optimal arousal shifts rightward as we go from sleeping, to socializing, to hunting, to fighting a bear.

More Arousal → More Focus is also a pretty good heuristic. You can expect the median distraction point to reflect this. A particularly bright bird may steal your focus during socialization, it may take someone calling your name to break you out of concentration when hunting a squirrel, and you likely won’t notice your friend Bobby accidentally shooting you in the buttocks until after your fight with the bear.

But the arousal curve and its correlates are not perfectly adapted for the modern world. Novel activities like math may benefit from bear-fight levels of focus but warm-blanket amounts of anxiety. It’s not clear how to induce such mental states.

It’s also unclear from this model why the eponymous symptom of ADHD is hyperactivity and not malaise. The short explanation here is that there’s no consensus model that cleanly wraps together the various symptoms of ADHD. For me, this is an excuse to move down to create an even more fundamental paradigm.

This can be done by giving focus the opioid treatment. What happens if we remove side effects like anxiety and slowly increase a theoretically pure focus?

Starting at low, we may go from flailing about in a classroom, to sitting quietly in our chair fidgeting, to being locked-in on our math homework, to being locked-in on perfect penmanship during our math homework. There’s an inherent narrowing of temporality here. If you’re unfocused you will likely find yourself thinking about things not in the here and now: cookies, a terrible embarrassment from your childhood, or maybe the end of the world. Narrowing focus involves concentrating on your own personal present. But things get weird as this temporality is pushed to extremes, e.g., math problem → lettering → → → the entirety of your reality is whatever speck happened to be in front of you → beyond the event horizon, there’s no past, no future, only focus.

Maximizing pure focus won’t get us an A on our math homework. A functional goal is needed: ability to concentrate. This is optimized by shifting focus left and right on the X axis. The general concept looks like this:

This is an Inverted U. It’s clear and clean and can immediately be used to make predictions. But what if I made it confusing instead?

Please excuse my terrible graph

To back up a second, I think many people seek out Adderall hoping it will make them smarter. They may be assuming an upward shift in an ability to concentrate curve like the one above. Such a transformation is highly unlikely in the same way a pill improving across the board IQ is highly unlikely: there are too many mechanisms at play, and each has its own inverted U.

But smarter people do exist, and it’s plausible many of them have upwardly shifted curves similar to the one above. So there is an important takeaway: even a basic model of ability to concentrate needs another variable.

The simplest addition might be the complement of focus: divergence. The sum of focus and divergence determines the location of the curve on the Y axis. (allowing for the above shift.) But most of us travel along our curve in a (mostly?) zero-sum exchange between focus and divergence. That latter point is the one of interest. Modeled, it looks like this:

Our jargon here is semantically close to colloquial English. To read this sentence, you must focus on the words, you must remember their meaning long enough to string them together in a complete idea. But to understand this essay you need divergence to shift from one idea to the next. You must connect the limited written instructions on this page to a vast empire of prior knowledge. You absolutely must bounce ideas around in your brain a lot. An example of the importance of divergence:

You and your date have just finished dinner. You’re feeling each other out, each of you not sure what the next move is. Your lovely lady decides to take the plunge: “Would you like to come back to my place for some coffee?” she asks, eyes sparkling. You focus in on the question and reply, “Do you have decaf coffee? It’s much too late for me to drink caffeine. There’s a Starbucks located optimally between our two homes, let’s go there.”

Oops.

Divergence isn’t just a tool for tacit communication around social taboos. It is a necessary component of rational thought. Position trees in chess are gigantic. Trying to brute force them isn’t slow on a human scale, it’s slow on a the universe will only last so long scale. Frequent divergence is critical. The concept looks like this:

Do chess computers work this way?

Divergence is necessary, but too much of it is bad. A particularly unfocused chess player may get distracted by an obscure move in a less promising tree. Worse, his line of thought may deviate entirely from prior goals. What would Kasparov play here? He sure hates Putin. Does Putin play chess? Does he have accurate self assessment at measurable skills compared with the general population or Western leaders? Is this domain specific? Hmm. Whose move is it?

This distracted chess player doesn’t have low focus, he has low net focus (Focus - Divergence.) These two phenotypes often express identically to an outside observer. But the high divergence phenotype is theoretically adaptive for complex tasks that carry sufficient interest. If our chess player above cared more about chess than he did politics, his divergence would lead to more novel play than a balanced chess player of equal skill. He may not be optimized for match play, but he might be an ideal study partner.
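Here is a minimal sketch of that distinction. The class name, the two derived quantities, and every number are my own illustrative assumptions, nothing measured: net focus is taken as focus minus divergence, and the sum of the two stands in for the vertical position of the curve.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thinker:
    """Hypothetical two-variable model of a thinker (arbitrary units)."""
    focus: float
    divergence: float

    @property
    def net_focus(self) -> float:
        # What an outside observer sees: focus minus divergence.
        return self.focus - self.divergence

    @property
    def capacity(self) -> float:
        # Assumed stand-in for the height of the curve: focus plus divergence.
        return self.focus + self.divergence


# Two made-up phenotypes that look equally distracted from the outside.
low_focus = Thinker(focus=1.0, divergence=3.0)
high_divergence = Thinker(focus=3.0, divergence=5.0)

print("net focus:", low_focus.net_focus, high_divergence.net_focus)
print("capacity: ", low_focus.capacity, high_divergence.capacity)
```

Both players share the same net focus, so they would get distracted about equally often, but only one of them has a lot of both ingredients to work with.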

This is a purely speculative framework, and it has produced what for me is a very intriguing phenotype. The interplay between focus and divergence isn’t entirely outside of the realm of science. From Kasof 1997: “Charles Darwin attributed his insights in part to his tendency to notice irrelevant stimuli, which he was so unable to screen out that he required absolute silence to work.” Was Darwin high divergence? Is this a trait that leads to underperformance in school/work by ‘gifted’ students?

I dug a little further here and… maybe? A young Charles was a very average student at an average primary school. Janet Browne, in Charles Darwin Voyaging addresses this somewhat apologetically:

[Darwin’s father] consequently reacted with equanimity to the news from Shrewsbury School that Charles was not much of a classical scholar. From his own scientific interests and the pleasant hours shared with his son in the garden and carriage he could see it was only Butler’s emphasis on traditional learning that generated indifference.

High divergence may also be a promising variable for relative outcomes in aristocratic tutoring.

There’s something a bit cutesy here. The emergence of the high divergence phenotype aligns well with cultural beliefs that people with ADHD aren’t disabled but differently-abled. Such nice-isms often conflict with consensus science, for example, Russell Barkley in the authoritative ADHD in Adults: What the Science Says:

Some authors claim that adults with ADHD are more intelligent, more creative, more “lateral” in their thinking, more optimistic, more entrepreneurial, and better able to handle crises than those without the disorder. Similar advocates of adult ADHD have gone so far as to assert that the disorder conveys some positive benefit. To our knowledge, none of these claims have any scientific support at this time. Most, in fact, are refuted in this book.

Unravelling this is a matter of perspective. Looking at an individual we can assume focus and divergence are partially zero-sum. But at the group level we cannot expect those who are low focus to necessarily be high divergence. The compatibility of these perspectives is easier to understand in exercise science:

Let’s assume a trait, fitness, that is a combination of endurance and speed. As fitness is maximized, the relationship between endurance and speed approaches complete zero-sum-ness. Here is an unexhaustive list of mechanisms: fast twitch vs slow twitch muscles, overall muscle mass, relative muscle location, water weight (for lactic vs aerobic output). The phenomenon is robust: it extends through genetic and environmental factors.

On a group level? For [lack of] clarity, let’s copy the general methodological setup used for ADHD. Start with a large, random group of the general population and have them race 10km. Take the slowest 5% of times and label the runners as having Endurance Deficit Disorder. Separate this group from the Endurotypicals and have everyone in both groups run 100m sprints. Analyze the data.

Between the Endurance Deficit group and Endurotypicals, who will be speedier on average? Endurotypicals. But from which group did the very fastest runners come? Ok, still Endurotypicals. Does this give us any real world information about the relationship between speed and endurance? No. [Have we learned anything about Endurance Deficit Disorder? I’m struggling to find much value in this methodology.]
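The thought experiment can be run as a toy simulation. This is a sketch under loud assumptions: each runner gets a general fitness score plus a small zero-sum "tilt" that trades speed for endurance, and the distributions, sample size, and seed are all invented for illustration.

```python
import random
from statistics import mean


def simulate_runners(n=20000, seed=0):
    """Build a made-up population with a built-in speed/endurance trade-off."""
    rng = random.Random(seed)
    runners = []
    for _ in range(n):
        fitness = rng.gauss(0.0, 1.0)   # assumed: varies widely between people
        tilt = rng.uniform(-0.5, 0.5)   # assumed: zero-sum within a person
        runners.append({"endurance": fitness + tilt, "speed": fitness - tilt})
    return runners


def split_by_endurance(runners, fraction=0.05):
    """Label the worst `fraction` of endurance scores as the deficit group."""
    ranked = sorted(runners, key=lambda r: r["endurance"])
    cutoff = int(len(ranked) * fraction)
    return ranked[:cutoff], ranked[cutoff:]


runners = simulate_runners()
deficit, typical = split_by_endurance(runners)

deficit_mean = mean(r["speed"] for r in deficit)
typical_mean = mean(r["speed"] for r in typical)
fastest = max(runners, key=lambda r: r["speed"])
fastest_is_typical = any(r is fastest for r in typical)

print("mean speed, Endurance Deficit:", round(deficit_mean, 2))
print("mean speed, Endurotypical:    ", round(typical_mean, 2))
print("fastest runner is Endurotypical:", fastest_is_typical)
```

Every simulated runner trades speed for endurance one-for-one, yet the group comparison only picks up the differences in overall fitness. The trade-off is in the data by construction and the methodology never sees it.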

To be clear, the whole Focus vs Divergence framework is deeply theoretical. It’s not meant to make precise predictions. It’s a base concept that can later be built off of. For now it makes basic predictions about how certain pharmaceuticals might work.

Formal Theory. Re: Adderall and Cognitive Enhancement

A first principles type model for a drug that increases focus should look like this:

We can expect those who have low focus to need a high dose of the focus drug to give them optimal ability to concentrate. We can expect people with ok focus to benefit from a medium dose. We should also note that this graph predicts that people who are slightly underfocused will perform better with 1 unit, will perform identically with 2 units (null hypothesis confirmed, p<.05) and they will perform worse with 3 units. For any right-shifted individual the drug effect curve will be an inverted U.
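A minimal sketch of those predictions, assuming the simplest inverted U I can think of: a downward parabola around an arbitrary optimal focus, with each unit of drug adding one unit of focus. The function names and all numbers are hypothetical.

```python
OPTIMAL_FOCUS = 5.0  # assumed peak of the inverted U, arbitrary units


def ability_to_concentrate(focus):
    """Inverted U: best at OPTIMAL_FOCUS, worse on either side."""
    return -(focus - OPTIMAL_FOCUS) ** 2


def score_on_dose(baseline_focus, dose):
    """Assumption: each unit of the drug adds one unit of focus."""
    return ability_to_concentrate(baseline_focus + dose)


def best_dose(baseline_focus, doses=range(0, 6)):
    """Pick the dose that maximizes ability to concentrate."""
    return max(doses, key=lambda d: score_on_dose(baseline_focus, d))


slightly_underfocused = 4.0
very_underfocused = 2.0

# Scores for 0, 1, 2, and 3 units of the drug.
scores = [score_on_dose(slightly_underfocused, d) for d in range(4)]
print("slightly underfocused, doses 0-3:", scores)
print("best dose, slightly underfocused:", best_dose(slightly_underfocused))
print("best dose, very underfocused:    ", best_dose(very_underfocused))
```

For the slightly underfocused person, 1 unit beats no drug, 2 units lands on the mirror-image point of the curve and ties with no drug, and 3 units is worse than nothing.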

Some Science

I’ve tried to make myself familiar with the literature here. Historically speaking, I’m bad at this sort of thing. Jargon-filled papers in neuroscience are painful for me to find, read, and digest. I can’t be certain I haven’t missed something significant from the literature: sometimes overlooking a single search term leaves a whole giant branch unexplored. But I have gotten to the point where increasingly on-topic papers feel increasingly predictable. I’m hoping I’m accurately summarizing a good portion of the efficacy literature here.

The overwhelming consensus is that stimulants are cognitively enhancing for those with ADHD. We can take this as a given and focus on ‘neurotypicals.’ Here, the data are nebulous. Results for cognitive enhancement generally hover at or slightly above null, but with contradiction. I’m going to bombard you with generally representative conclusions:

The popular opinion that MPH enhances attention was not verified through the meta-analysis. This result is in concordance with most of the individual studies, which reported either no effects, or even negative effects, such as a disruption of attentional control. The positive, albeit solitary result for memory enhancement seems at first insufficient to explain the reported high prevalence of use of MPH for non-medical reasons. [source]

There is very weak evidence that putatively neuroenhancing pharmaceuticals in fact enhance cognitive function. A recent report from the British Academy of Medical Sciences concluded that there was very limited evidence for cognitive enhancement in healthy individuals and effects on vigilance, verbal learning and long-term memory were small under laboratory conditions [6]. It is uncertain whether effects in laboratory tests translate into benefits in everyday life, especially when these drugs are used regularly over extended periods [7]. It is also unclear whether people who function well obtain much benefit or whether increased performance in one cognitive domain is at the cost of deficits in others. [source]

It remains unclear whether stimulant medication has the same effect on healthy individuals as for those with ADHD. [source]

Results revealed that Adderall had minimal, but mixed, effects on cognitive processes relevant to neurocognitive enhancement. [source]

In summary, the present pilot study indicates that a moderate dose of Adderall has small to minimal effects on cognitive processes relevant to academic enhancement. [source]

Thus, the rumored effects of “smart drugs” may be a false promise, as research suggests that stimulants are more effective at correcting deficits than “enhancing performance.” [source]

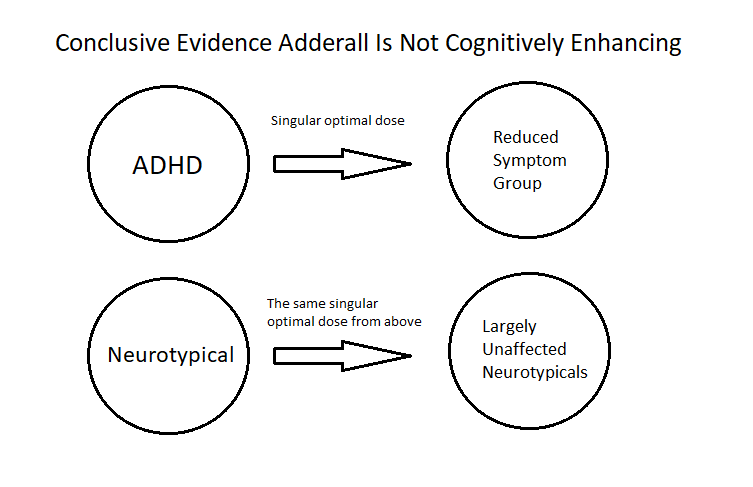

This is my visual summation of much of the science:

This is hyperbolic, but it does highlight problems in the current scientific model. The usage of high doses in the neurotypical population is of particular concern. Here’s a fairly representative sample from a study included in the reviews above:

10 healthy subjects were enlisted to take 0.25 mg/kg d-amphetamine or placebo before performing a working memory task while undergoing fMRI scanning (Mattay et al., 2000). Each subject participated on placebo as well as on drug to establish a baseline score for comparison of the drug effects. Subjects who had low working memory on placebo showed improvement while on d-amphetamine for the most challenging parts of the task; those with high working memory at baseline were impaired by the drug.

This is officially a ‘low/medium’ dose, but .25mg/kg d-amphetamine is about 20mg for my kg. I take 5mg mixed, and l-amphetamine is 2-10x less potent, so I take the equivalent of 4-ish mg of d-amp [Amphetamine is Racemic, Adderall is mixed, d-amp is the potent enantiomer, and these details aren’t important for this essay.] The takeaway: this is ~5x my typical dose, and I’m not a midpoint neurotypical.
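The arithmetic, spelled out as a sketch. Two numbers here are assumptions rather than things stated above: a body weight of 80 kg (implied by 0.25 mg/kg coming out to about 20mg) and a 3:1 ratio of d- to l-amphetamine in mixed amphetamine salts.

```python
STUDY_DOSE_MG_PER_KG = 0.25     # from the study excerpt
ASSUMED_BODY_WEIGHT_KG = 80.0   # assumption: implied by "about 20mg"
MIXED_DOSE_MG = 5.0             # the 5mg mixed dose
ASSUMED_D_FRACTION = 0.75       # assumption: 3:1 d- to l-amphetamine
L_RELATIVE_POTENCY = (1 / 10, 1 / 2)  # l-amp is 2-10x less potent

study_dose_mg = STUDY_DOSE_MG_PER_KG * ASSUMED_BODY_WEIGHT_KG

d_part_mg = MIXED_DOSE_MG * ASSUMED_D_FRACTION
l_part_mg = MIXED_DOSE_MG - d_part_mg

# Convert the l-amphetamine share into d-amphetamine equivalents.
d_equiv_low = d_part_mg + l_part_mg * L_RELATIVE_POTENCY[0]
d_equiv_high = d_part_mg + l_part_mg * L_RELATIVE_POTENCY[1]

ratio_low = study_dose_mg / d_equiv_high
ratio_high = study_dose_mg / d_equiv_low

print(f"study dose: {study_dose_mg:.1f} mg d-amp")
print(f"my dose: {d_equiv_low:.2f} to {d_equiv_high:.2f} mg d-amp equivalent")
print(f"study dose is {ratio_low:.1f}x to {ratio_high:.1f}x mine")
```

Under these assumptions the potency uncertainty for l-amphetamine barely matters, because it only applies to a quarter of the pill.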

Picture however much coffee your kinda/sorta ADD friend drinks, multiply it by 5, and predict what would happen to your standardly-stimulated non-coffee-drinking friend if she chugged this whole urn before a math test. Do you expect her to acquire intellectual super powers? Spoiler: not unless having to pee really really bad is an intellectual super power.

If you’re skeptical that the bulk of the science uses doses that are vastly too high, so am I. This is a particularly difficult issue to dive into, because doses are typically not cited nor heavily discussed in papers. But all the evidence I’ve found leads me to this conclusion. Another example: 20mg mixed amphetamine was the ‘low’ dose threshold in one of the major reviews. These ‘low doses’ often have the greatest effect. Given the inverted U, this is strongly suggestive that even lower doses may be more effective for some individuals. It’s really hard to find ‘lower’ dose studies.

I insinuated this in the graph, but my assumption is the dosing for neurotypicals is largely copied from the much better studied/funded ADHD literature. This type of thinking might be another side effect of the threshold categorization of ADHD, but there are a lot of interrelated inertial factors that may be at play here: the lack of funding for “cognitive enhancement,” the difficulty of working with human patients, the ton of paperwork required because stimulants are schedule II drugs, et cetera.

Getting a dose curve requires a very large and well controlled population sample. The perfect science here would include finding dose curves for hundreds of individuals for a variety of tasks, and then searching for predictive patterns. This would take a monumental effort and require monumental funding from organizations cognizant of the existence of an entire spectrum. Currently, this is far outside of political/social reality.

Until that science is done (or someone directs me to a huge chunk I haven’t found?) we’re left with a smattering of low dose studies. For selfish/lazy reasons, I only looked into amphetamine dosing at or below my current dose. There are extremely few studies that have data below 10mg d-amp. I found only a handful. Two of these may carry a disproportionate influence. They looked at the heuristics of ‘tapping speed’ and ‘subjective ratings of hunger’ respectively, and they both found null results. This suggests low dose amphetamines don’t pass through a measurable threshold. I’m skeptical.

To be fair, there are often models 100s of papers deep that explain why things like ‘tapping speed’ or ‘subjective ratings of hunger,’ are good heuristics for more interesting threshold effects like cognitive enhancement. Forgive me for not having taken this plunge. Anecdotally, what I’ve always told people is that Adderall makes it more likely that I’ll ignore eating (if I’m focused on another task) but it doesn’t affect my appetite or enjoyment of food while I’m eating. This fits my intuition on how arousal and hunger should interact. People are certainly less hungry at a fighting a bear level of arousal, and this is likely true of the opposite extreme of arousal, sleep. But I would expect hunger and arousal to be largely independent in normal circumstances. A quick look at hunger by hour confirms the correlation isn’t untenably high.

The underwhelming evidence that amphetamine has a high threshold dose is countered by underwhelming evidence it has an extremely low one: I found a single low-dose study that looked for threshold effects more directly related to focus. It’s from 1973: a handful of participants listened to boring audio for hours on end and were measured for detection errors. The study found both 1mg and 2mg d-amp reduced ‘audio detection decrement.’ This is an order of magnitude less amphetamine than other ‘low dose’ studies. It’s a tiny piece of data, but it may deserve more attention.

The Inverted U is well established in psychopharmacology, and there are plenty of references to it in the cognitive enhancement of neurotypicals literature. I’ll start with the cleanest graph I found amongst the one million papers I read. Please admire it in all its splendor.

Unfortunately the test subjects here are mice. The measured variable, freezing response, is a heuristic for improved memory. The first author of this study, Suzanne Woods, also first authored my favorite review. She addresses the inverted U:

One common graphic depiction of the cognitive effects of psychostimulants is an inverted U–shaped dose-effect curve. Moderate arousal is beneficial to cognition, whereas too much activation leads to cognitive impairment.

There are, to my continued surprise, no large non-review studies that attempt to find the inverted U in human neurotypical populations. There is a lot of evidence such studies may yield interesting results. Here’s another bombardment:

A striking observation is that the low dose exerted the highest proportion of effects on cognitive performance in this domain, followed by the medium dose, with the highest dose showing the lowest proportion of effects. [source]

Across the domain of verbal learning and memory the low and medium doses exert more cognition enhancing effects than high doses. [source]

In sum, the evidence concerning stimulant effects of working memory is mixed, with some findings of enhancement and some null results, although no findings of overall performance impairment (Smith and Farah 2011). However, the small effects were mainly evident in subjects who had low cognitive performance to start with, showing that the drug is more effective at correcting deficits than “enhancing performance.” [source]

The highest proportion of significant effects was observed with the medium dose. This was also reflected by a subset of tests, namely the spatial working memory tests. It is possible that the MPH-effect follows an inverted-U-shaped curve as a function of dose [source]

Adderall lead to mixed effects including both impairment in cognitive functioning (working memory) and improvement in attention performance. These findings are generally consistent with meta-analytic findings by [29] who found small effects of amphetamine and methylphenidate on working memory and inhibitory control in healthy adults but concluded that the effects are “probably modest”. It is important to note that a robust body of literature exists that supports the positive effects of prescription stimulants on neurocognitive functioning in children and adults with ADHD (e.g., [14,54,55]), underscoring the importance of baseline impairments in performance relative to improved effects. [source]

This next one is especially interesting:

Kimberg et al. (1997) [gathered data for] the dopamine agonist bromocriptine. Their sample’s mean performance on an executive function battery was numerically almost identical on drug and placebo, similar to the findings with three of the tasks in this study. However, after a median split on working memory span, it was found that the lower half of the participants improved significantly on the drug and the upper half declined by the same amount. A similar, though less extreme, pattern has been found in studies of the effects of methylphenidate and amphetamine on executive functions, including working memory (Mattay et al. 2000, 2003; Mehta et al. 2000) and inhibitory control (DeWit et al. 2002). In these studies, participants who performed worst on placebo tended to improve the most with stimulant medication, whereas those who performed best tended to show less improvement or even show worse performance with the stimulant. [source]

That last quotation is from the abstract of a paper by Martha Farah on Adderall and creativity. In a section titled Individual Differences in Drug Effect, she addresses the concept in her own research.

Adderall may affect performance differently in different subjects, depending on their baseline or placebo level of performance. The dependence of a drug effect on a participant level of ability can entirely mask the effect of the drug when the whole sample of participants is considered together.

Bingo.
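A toy sketch of how that masking works. The scores below are invented purely for illustration (they are not data from Farah, Kimberg, or anyone else), and the names `placebo`, `drug`, and `mean_change` are my own. The lower half of the sample gains two points on the drug, the upper half loses two, and the whole-sample average doesn't move.

```python
from statistics import mean, median

# Hypothetical scores, made up for illustration only.
placebo = [2, 3, 4, 5, 6, 7, 8, 9]
drug = [4, 5, 6, 7, 4, 5, 6, 7]  # lower half gains 2, upper half loses 2


def mean_change(pairs):
    """Average (drug - placebo) difference over (placebo, drug) pairs."""
    return mean(d - p for p, d in pairs)


pairs = list(zip(placebo, drug))
cutoff = median(placebo)  # median split on placebo performance

lower = [(p, d) for p, d in pairs if p < cutoff]
upper = [(p, d) for p, d in pairs if p >= cutoff]

overall_change = mean_change(pairs)
lower_change = mean_change(lower)
upper_change = mean_change(upper)

print("whole sample:", overall_change)
print("lower half:  ", lower_change)
print("upper half:  ", upper_change)
```

A study that only reports the whole-sample line would call this a null result.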

There’s more:

In the Remote Associate Test, participants obtained on average 5.07 out of 15 correct in the placebo condition and 5.00 in the Adderall condition.

This is a null result. But:

Placebo performance predicted the size of the drug effect in the [same] Remote Associate Test: p<0.001. The drug enhanced creativity for the lower-performing participants and impaired it for the higher-performing participants.

[There’s an author-acknowledged confound of regression to the mean here. The effect holds, but with a small-ish asterisk.]

That’s an enormous finding.
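The regression-to-the-mean asterisk is worth making concrete, because it can produce the same pattern with no drug effect at all. A minimal simulation, under assumptions that are entirely mine: each subject has a stable ability, each test session adds independent noise of the same size, and the "drug" does nothing. The function names and parameter values are hypothetical.

```python
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate_null_drug(n_subjects=5000, seed=0):
    """Correlate placebo score with (drug - placebo) when the drug is inert."""
    rng = random.Random(seed)
    placebo_scores, changes = [], []
    for _ in range(n_subjects):
        ability = rng.gauss(5.0, 2.0)                # stable trait (assumed)
        placebo_score = ability + rng.gauss(0.0, 2.0)  # session noise
        drug_score = ability + rng.gauss(0.0, 2.0)     # same ability, new noise
        placebo_scores.append(placebo_score)
        changes.append(drug_score - placebo_score)
    return pearson(placebo_scores, changes)


r = simulate_null_drug()
print("correlation between placebo score and change:", round(r, 2))
```

With ability and noise given equal variance, the expected correlation is -0.5: low placebo scorers "improve" and high scorers "decline" purely because a noisy score tends to be followed by a less extreme one. That is why the finding carries an asterisk, and why its size depends on how noisy the test is.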

For convenience I’ll repost my graph here:

The summary of the science seems to be that cognitive enhancement only works in low performing groups. But it generally misses the takeaway: maybe more competent people should take lower doses.
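One toy reading of the lower-dose idea, as a sketch rather than a model of any real drug. I'm assuming performance is a simple downward parabola in "arousal" with its peak at 1.0, and that a dose just adds to baseline arousal. The curve, the units, and the numbers are all made up.

```python
OPTIMUM = 1.0  # hypothetical arousal level at the peak of the inverted U


def performance(arousal):
    """Inverted-U performance curve (an assumed quadratic, peak value 1.0)."""
    return 1.0 - (arousal - OPTIMUM) ** 2


def change_from_dose(baseline_arousal, dose):
    """Performance change when a dose shifts arousal upward by `dose`."""
    return performance(baseline_arousal + dose) - performance(baseline_arousal)


LOW_BASELINE = 0.4   # hypothetical low performer, far left of the peak
HIGH_BASELINE = 0.9  # hypothetical high performer, just left of the peak

for label, baseline in (("low baseline", LOW_BASELINE), ("high baseline", HIGH_BASELINE)):
    for dose in (0.1, 0.4):
        delta = change_from_dose(baseline, dose)
        print(f"{label}, dose {dose}: change {delta:+.2f}")
```

Under these assumptions the larger dose helps the low performer and pushes the high performer past the peak, while the smaller dose nudges the high performer onto it.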

I won’t linger on that here because there’s another result in the same study that’s distracted me. On the same Remote Associate Test:

Adderall enhanced performance for those who took it second (from 4.00 to 5.70 correct on average), whereas it impaired performance for those who took it first (from 6.00 to 4.38 on average).

This is a relatively small effect in a single, probably unrepeated study, and it only showed up on 1 of 4 tasks. But I find it deeply explanatory. In the context of focus vs divergence, we would expect divergence to be adaptive for novel tasks. It’s beneficial to try different things and see what works. But once a good strategy is found continued divergence becomes maladaptive. The optimal tactic is to focus on what’s working. This may be a critically important pattern.

Conclusions and unfounded speculation

Steven Pinker once mused that people might just be bad at holding the concept of a normal curve in their mind’s eye. If you’re one of those people, I strongly agree with you that: ADHD is biological, it has threshold symptoms for every arbitrary shift in diagnostic criteria, it can be debilitating, people with ADHD need a lot more help than those without it, et cetera. The extremes of polygenic spectrums can be bad places to be. But taking an arbitrary point on the spectrum, e.g., your son Mike who “absolutely needs Adderall to function,” and building it into a wall with Mike standing on one side and everyone a little shifted from Mike standing on the other isn’t an ethical act.

The unexpected truth for Mike’s parents (and much of the medical establishment) is that the morality of treating ADHD isn’t “curing” a “disease.” Instead, the morality of treating ADHD comes from shifting those on one side of a curve towards its functional peak. The fundamental moral principle at play here is *drumroll* cognitive enhancement. It’s a truism that we should give Adderall, Methylphenidate, pure Methamphetamine and/or Space Aids to anyone who wants it if benefits outweigh costs. That if is critical (and rare vis-à-vis Space Aids).

But prescription stimulants aren’t precision devices. They operate with about as much sophistication as (arguably less than) the coca leaves people have been chewing for thousands of years. The prior that dousing every inch of your brain in neurotransmitter-soup is cognitively enhancing should be rather low. Further, there’s all the reason in the world to think it’s especially difficult to get things working better when they’re already working well. But the devil is in the details.

My guess is that small doses of stimulants will improve performance on rote tasks across the ability-to-concentrate spectrum. The emphasis here is rote tasks. A lot of these are so boring as to not be subject to the inverted U (see the original Yerkes-Dodson law for reference). There are a lot of rote tasks in the world, and we can see why demand for stimulants amongst those tasked to do them is high, e.g., students.

But for increasingly complex tasks, especially real world tasks requiring various aspects of intelligence, the phenomenon of the inverted U means that high functioning individuals are unlikely to generically benefit from any pharmaceutical. Trying to parse this out scientifically will likely yield some deviously misleading results:

A programmer might perform better under the influence of Adderall 90% of the time. Similarly, 90% of programmers might perform better under Adderall. But a minor creative improvement from the 10% may provide a novel and replicable increase in efficiency. If the task is iterated another 10, 100, or 1,000 times the benefit of the improvement grows commensurately. A solitary moment of genius may be worth a thousand moments of distraction.
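The arithmetic behind that claim, as a back-of-the-envelope sketch. Every number here is hypothetical: I'm assuming the focused programmer finishes each run of a task 10% faster, while the distracted one burns five runs' worth of time wandering before landing on an insight that halves every run. `net_advantage` is a name I made up.

```python
def net_advantage(n_runs, focused_time=0.9, insight_time=0.5, insight_cost=5.0):
    """Time saved by the divergent path relative to the focused one.

    All defaults are illustrative assumptions, in units of
    "one unmedicated run of the task".
    """
    focused_total = n_runs * focused_time
    divergent_total = insight_cost + n_runs * insight_time
    return focused_total - divergent_total


for n_runs in (10, 100, 1000):
    saved = net_advantage(n_runs)
    print(f"{n_runs:>5} runs: divergent path saves {saved:+.1f} units")
```

With these made-up numbers the focused programmer is ahead at 10 runs and behind at 100 and 1,000. A study that measures only a handful of runs would score the focused condition as the winner.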

I’m being a little unfair here. I’ve emphasized that stimulants shift focal intensity, but do not lift the ability-to-concentrate curve. But this is probably not entirely true. Some individuals do experience overwhelmingly positive effects. Snapshots of my own life before and after usage resemble a dubious weight loss ad. Before: [poor lighting and] scrumpled notes and a messy house. After: [a bright summer day and] long drafts and sparkling countertops. But read the fine print: results not typical.

One of the most interesting posts I have read. I didn't need any convincing because I always thought the threshold model was stupid. And that fallacy isn't only limited to the disease model, it's everywhere.

"Why should I exercise? I am already healthy."

"Why should I reduce spending? I am able to pay my bills just fine."

The `Net X = Apparent X - Complement of X` model is one I can see being useful in many domains as well, and it is probably one of the more applicable world models to carry around.

Sorry for this long comment, I honestly am still learning what is/isn't standard etiquette when it comes to comments. I don't do it often!

This was very interesting, in particular your sections on "focus v. divergence" and summarizing the studies on whether Adderall is cognitively enhancing for non-ADHD people. I wanted to share a thought I've had on this topic, that I revisited after reading your opening anecdote about being diagnosed w/ ADHD, then...uh, cured, I guess, by the CPT test, haha.

I work in psych and enjoy following contentious issues therein. I have reread Scott’s old post on Adderall. I have read and listened to Nassir Ghaemi’s thoughts on the validity of Adult ADHD (he’s very skeptical). I would put your helpful essay somewhere in that discussion, for sure.

One aspect I haven’t heard written out at length is something like: To diagnose ADHD in kids, we often will ask about domains of functioning i.e. “OK, your kid has reported symptoms of ADHD. As a clinician, yeah, I guess I see those symptoms now in my office. But! Is your kid doing poorly in school and do his teachers also see these symptoms and attribute his poor academic performance to said symptoms? Do you, the parents, see these symptoms at home and find that they impair your kid’s function there as well?" This is more or less the Vanderbilt screening tool for ADHD - the parents fill one out, the teacher fills one out, and then the clinician sort of adds those two together, along with their own clinical observations (very rough approximation of the process, but pretty accurate...assuming ANY assessment forms are used at all). *Sorry, I am struggling to use consistent pronouns in all of these different examples to come, please bear with me.*

This is, I guess, fine for kids. The reason, whether explicitly stated or not by clinicians and the researchers who make assessment tools, is that school and home life represent a kind of a standardized thing that all kids have to go through…kind of, let's not go into differences between schools’ curricula/teacher competency etc. To put it another way, if the average academic experience of students across the U.S. were suddenly, radically altered…we should expect that rates of ADHD would also be radically different, in one direction or the other. Right? Leaving aside fMRI/bio markers/whatever, we simply would not have such a thing as ADHD as we know it if we didn’t have a mostly standardized trajectory which we expect kids to follow (or deviate from and get diagnosed with ADHD). Suffering, especially when it comes to young kids with ADHD, is not diagnostic of the disorder. It's mostly assumed to be ego-syntonic i.e. the kid feels fine and is happy, except for when teachers yell at him or his parents yell at him for doing ADHD-kid stuff.

So, when it comes to adult ADHD, the difference now is that there is no standardized trajectory for an adult in the same way that there is for kids. An adult (who may have a brain consistent with adult ADHD) can choose a career path that is super easy, if they want (fill in this blank with whatever probably low-paying job you think is really easy to do). Or, potentially, an adult can swing for the fences and try his or her hand at a job that’s hard but they feel really passionate about. No, this for-the-fences-swinging adult was never diagnosed with ADHD as a kid, that’s true. Whatever academic experience they had K-12 may have just never crossed their own particular threshold for focus. However, now that they have chosen a life path that is “harder” than what their unique amount of focus/impulse-control can muster…they have symptoms of ADHD. And yeah, this would also apply to social/family life as well. You might choose a monk-like existence where you tailor your every waking moment to jibe nicely with your own unique brain OR you might have kids and dynamite to hell any chance you had of structuring each day to suit your needs. There again, an adult-level life choice just swung you from “No ADHD” to “Wow, yes, ADHD, for sure"...the same way it would for a kid shoe-horned into a particular trajectory when they enter Grade ## and start struggling with everything.

If any of this makes any sense at all, I just wonder: What’s the point, then, in being reflexively skeptical of adult ADHD (as Ghaemi is) or even having to say something like “Well, you’re neurotypical, but may benefit from some low-dose Adderall, I suppose?” Being “neurotypical” in the ADHD sense would then be sort of moot. No, maybe an ADHD adult didn’t “neurodiverge” as a child, under those specific/fairly standardized childhood constraints, and they didn’t get diagnosed with ADHD. But why can’t it be said that this person DID become neurodivergent NOW as an adult, when they chose a life that exceeds their baseline capacity for focus and self-control? I don’t understand how the general ADHD-for-kids paradigm is any different. It’s just that in the case of an ADHD kid, they didn’t choose to have to track a standardized trajectory of intellectual growth, whereas an ADHD adult chose their own particular trajectory of intellectual growth. In either case, the brain is in over its head. Haha, yikes.

Yeah, of course, the entire idea of applying the term “neurotypical” or “neurodivergent” as it relates to ADHD just stops making any sense, if I’m taking myself seriously on this topic. This is all a long way of saying: I agree with you. Give people Adderall if it helps them and the benefits outweigh the risks.